Language and Computation in Neural Systems

Where we try to understand (model) the neural and cognitive basis of language processing.

The focus of our research group is to understand the computational principles and mechanisms that underlie the representation and processing of human language. Our aim is to develop a theory about how the brain generates human language that is based on principles from across the language sciences, the cognitive and computational sciences, and neuroscience—and to do so in a way that stays faithful to the constraints on neural computation, to the formal properties of language, and to human behavior (see also this recent People of Donders for more information).

Language is key to nearly all human activities, and is a defining human behavior. A fundamental question has shaped the study of language since its inception: Is our capacity for language built upon linguistic structure (e.g., grammar from Linguistics), or is it derived from the statistical patterns of language use (e.g., the probability of a sound or word given the preceding context)? The answer to this question matters because it determines what kinds of systems (viz., biological and artificial) can have language and has strong implications for the composition of the human mind.

In the Lise Meitner Research Group Language and Computation in Neural Systems, we study how the human mind encodes both the structure and statistics of language in neural dynamics, that is, rhythmic patterns of brain activity over time. We measure the effects of structure and statistics on neural dynamics during language processing, and construct computational models and theories of how the brain transforms sensory signals (e.g., speech, sign) into structured, meaningful language and turns language back into articulation during production. Because the particular division of labor between structure and statistics varies by language and by behavioral context, we collect magnetoencephalography (MEG) data in a variety of languages and a range of behavioral contexts, including naturalistic listening and speaking, and use these dynamics to constrain models of language processing.

Contact

Andrea E. Martin

- More detailed information


Human language is a unique, formally describable biological communication system in the natural world. Language behavior is not only key to everyday life but also a hallmark of being human. Human language itself is highly adaptable and dynamic, yet requires stable and robust knowledge of linguistic structures and patterns.

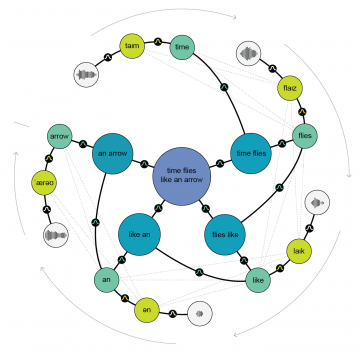

For our brains, this presents a rich computational problem: robustly representing linguistic knowledge (e.g., the speech or sign patterns, “words”, and grammar of your language) while using dynamic distributional information (e.g., when, how often, and in what contexts linguistic units and structures occur) to flexibly perceive and produce language. How is linguistic knowledge, the structure and statistics of language, encoded in the brain? And specifically, how does rhythmic population activity, or neural dynamics, serve to encode this information?

The Lise Meitner Research Group Language and Computation in Neural Systems investigates the mechanisms and computational principles that underlie the representation and processing of human language in the mind and brain. Our aim is to develop a theory about how the brain generates human language that is based on principles from across the language sciences, the cognitive and computational sciences, and neuroscience, and to do so in a way that stays faithful to the known constraints on neural computation, to the formal properties of language, and to human behavior.

We create theoretical models and computational implementations, then design neuroscientific experiments to test those models. One of our key foci is the role of “rhythmic computation” as a mechanism for symbolic representations in brain-like systems.

- Grants and awards


Max Planck Independent Research Group (2020-2024)

This 5-year program is the main funding used to set up the "Language and Computation in Neural Systems" group (Max Planck Society; 2020-2024).

2019 Aspasia Research Grant (Nederlandse Organisatie voor Wetenschappelijk Onderzoek (NWO))

2018 VIDI Research Grant (NWO, 2019-2024) “The rhythms of computation: A combinatorial mechanism for language production and comprehension”

- Shared Grants (co-PI or team leader)


2019 Language in Interaction Consortium: Big Question 5 (co-PI with Prof. Roshan Cools and team leader for sub-questions 2 and 3; share = 50%) (NWO; 2019-2023)

2017 Research Grant (co-PI with Dr. Patrick Sturt; share = 50%) (The Leverhulme Trust, United Kingdom, 2017-2019) “Integration of Information in Reading”
